AI chip ambition is easy to celebrate at the prototype stage. It becomes harder when wafers turn into shipments, and shipments must convert into revenue. As FuriosaAI moves its second-generation inference chip into broader production and distribution, Korea’s AI semiconductor narrative shifts from symbolic milestones to operational execution.

FuriosaAI Expands RNGD Production and HBM3E-Based Lineup While Preparing Series D Funding

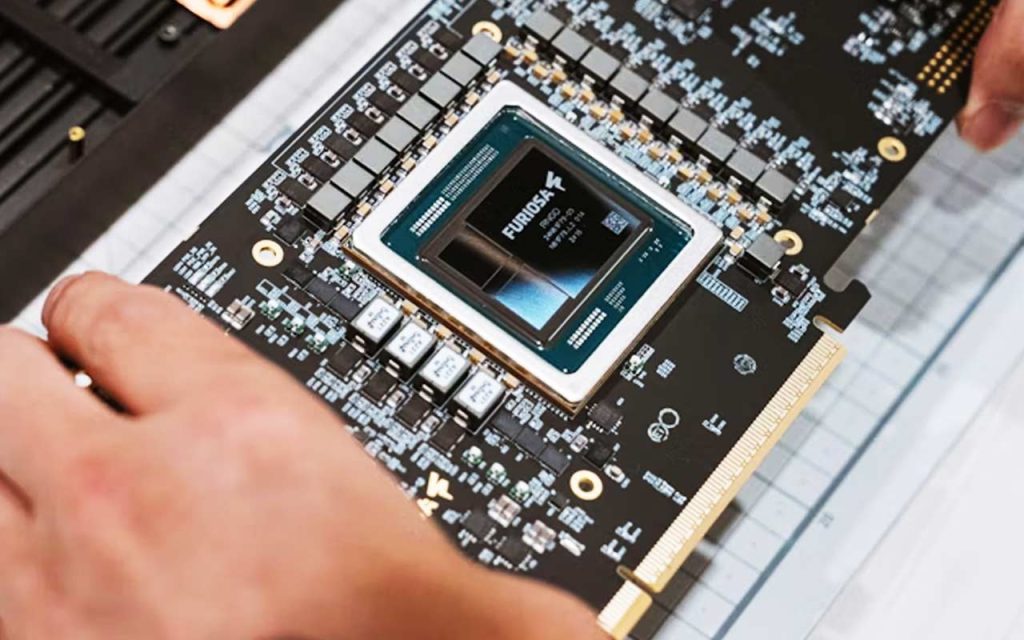

FuriosaAI has begun scaling production of its second-generation neural processing unit (NPU), Renegade (RNGD), and is preparing derivative products based on upgraded memory configurations.

According to semiconductor industry sources, the company plans to mass-produce “Renegade+ Max” in the second half of this year. The configuration integrates two Renegade+ chips on a single PCIe card and incorporates four units of HBM3E memory, raising total memory capacity to an estimated 144GB. The existing Renegade uses two HBM3 units with 48GB capacity, while Renegade+ upgrades to HBM3E and approximately 72GB.
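The reported capacity figures are consistent if each HBM stack is treated as a fixed-size unit. The minimal sketch below checks that arithmetic. The per-stack values are derived from the reported totals, not confirmed by FuriosaAI, and the two-stack count for Renegade+ is an assumption inferred from the four-stack, two-chip Max card.

```python
# Totals and stack counts as reported in the article.
# Per-stack capacity is derived here, not a confirmed specification.
configs = {
    "Renegade": {"chips": 1, "stacks": 2, "total_gb": 48},
    "Renegade+": {"chips": 1, "stacks": 2, "total_gb": 72},  # stack count is an assumption
    "Renegade+ Max": {"chips": 2, "stacks": 4, "total_gb": 144},
}


def gb_per_stack(cfg):
    """Divide total card memory evenly across its HBM stacks."""
    return cfg["total_gb"] / cfg["stacks"]


per_stack = {name: gb_per_stack(cfg) for name, cfg in configs.items()}

for name, gb in per_stack.items():
    print(f"{name}: {gb:.0f} GB per HBM stack")
```

Under these assumptions the move from HBM3 to HBM3E raises capacity from 24GB to 36GB per stack, and the Max card reaches 144GB by doubling the chip count, not by using larger stacks.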

FuriosaAI previously confirmed that it began mass-producing 4,000 RNGD chips using TSMC’s 5-nanometer process earlier this year. Monthly output of the second-generation chip currently stands at around 1,000 units, with plans to expand to 2,000–3,000 units per month by year-end. The company has set an annual production target of 20,000 units.
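To see how the monthly figures relate to the annual target, the sketch below annualizes each reported monthly rate and computes the flat monthly rate needed to reach 20,000 units. It assumes a constant rate over 12 months, which is a simplification: the article does not say whether the target refers to a calendar year or to a run rate, and the actual ramp is gradual.

```python
import math

ANNUAL_TARGET = 20_000                  # units per year, as reported
MONTHLY_RATES = [1_000, 2_000, 3_000]   # current rate and the reported year-end range


def annualized(monthly_units):
    """Yearly output if a monthly rate were held flat for 12 months (assumption)."""
    return monthly_units * 12


def min_monthly_rate(annual_target):
    """Smallest flat monthly rate that meets the annual target."""
    return math.ceil(annual_target / 12)


run_rates = {rate: annualized(rate) for rate in MONTHLY_RATES}
required = min_monthly_rate(ANNUAL_TARGET)

for rate, yearly in run_rates.items():
    status = "meets" if yearly >= ANNUAL_TARGET else "falls short of"
    print(f"{rate:,}/month -> {yearly:,}/year ({status} the target)")
print(f"Flat rate needed: {required:,}/month")
```

On this simplified view, the current rate of about 1,000 units per month would not reach the target on its own, while the low end of the planned year-end range would.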

In parallel, FuriosaAI is pursuing a Series D round reportedly targeting up to KRW 700 billion, with Mirae Asset Securities and Morgan Stanley serving as lead managers. Company representatives have indicated that proceeds will support additional mass production, global sales expansion, and third-generation chip research and development. An IPO is being considered in the 2027–2028 timeframe.

On the commercial front, IT infrastructure firm SysOne has signed a public-sector master distribution agreement to supply RNGD cards and NXT RNGD servers to Korean government institutions preparing AI inference deployments.

CEO Baek Joon-ho stated at the LG CNS AI Tech Summit 2026 that NPU products are entering the market in earnest as infrastructure and software maturity improve.

The CEO noted that while NVIDIA GPUs often operate in the 700–1000W range, Renegade targets approximately 200W power consumption, positioning it for deployment in existing air-cooled data center environments.
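The power gap can be made concrete with a rough device-count comparison. In the sketch below, the 15 kW rack budget is a hypothetical figure chosen for illustration, not a number from FuriosaAI or the article. The calculation ignores host CPUs, networking, and cooling overhead, and it says nothing about throughput per device.

```python
RNGD_WATTS = 200                # approximate target cited for Renegade
GPU_WATTS_RANGE = (700, 1000)   # range cited for NVIDIA GPUs
RACK_BUDGET_WATTS = 15_000      # hypothetical air-cooled rack budget (assumption)


def devices_per_budget(budget_watts, device_watts):
    """Whole devices that fit in a power budget, counting accelerator power only."""
    return budget_watts // device_watts


# How many times more power a GPU draws than an RNGD card, at each end of the range.
ratio_low = GPU_WATTS_RANGE[0] / RNGD_WATTS
ratio_high = GPU_WATTS_RANGE[1] / RNGD_WATTS

rngd_cards = devices_per_budget(RACK_BUDGET_WATTS, RNGD_WATTS)
gpus_at_high_draw = devices_per_budget(RACK_BUDGET_WATTS, GPU_WATTS_RANGE[1])
gpus_at_low_draw = devices_per_budget(RACK_BUDGET_WATTS, GPU_WATTS_RANGE[0])

print(f"Power ratio: {ratio_low:.1f}x to {ratio_high:.1f}x")
print(f"RNGD cards in budget: {rngd_cards}")
print(f"GPUs in budget: {gpus_at_high_draw} to {gpus_at_low_draw}")
```

A higher device count per rack is only an advantage if per-device throughput holds up, so this comparison frames the question without settling it.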

Baek also confirmed that FuriosaAI is building reference data centers in Korea, the United States, Portugal, and Malaysia to support customer validation.

Korea’s AI Semiconductor Narrative Moves From Symbolism to Shipment

Until recently, FuriosaAI’s story was anchored in technology validation and capital positioning. Production scale alters that framing.

A target of 20,000 chips per year may not rival hyperscale volumes, yet within the context of Korea’s fabless AI chip ecosystem, it represents a shift from development-stage rhetoric to commercial supply discipline. That distinction matters for venture investors, policy architects, and enterprise buyers evaluating whether domestic AI semiconductors can transition beyond pilot programs.

Public-sector distribution through SysOne further signals that inference deployment is no longer limited to research institutions. Government and enterprise infrastructure purchasing brings formal procurement cycles, compliance standards, and long-term service commitments, all of which tend to reveal operational maturity.

At the same time, the Series D round reflects the capital intensity of the business, not discretionary expansion. Scaling a semiconductor company requires funding, manufacturing, and customer onboarding to advance in step. The fundraising itself becomes part of the commercialization process.

The Real Constraint: Scale, Margins, and Hyperscale Adoption Uncertainty

Production capacity does not automatically translate into durable demand.

Industry observers have pointed out that the decisive variable is not theoretical power efficiency but measurable advantage against established GPU systems in rack-level throughput and operating economics. Demonstrating parity in lab benchmarks differs from displacing entrenched vendor ecosystems in hyperscale data centers.

FuriosaAI has confirmed collaboration and testing with domestic enterprise clients and reference deployments overseas. Yet expansion into U.S. or Middle Eastern hyperscale operators remains uncertain, according to industry commentary. Customer concentration risk and pricing pressure in a competitive inference accelerator market cannot be dismissed.

There is also the margin question. As competition intensifies in AI inference hardware, cost structures become exposed. If price concessions are required to secure anchor customers, profitability timelines may stretch, affecting both IPO valuation narratives and early investor exit strategies.

This is not a critique of execution. It reflects the structural reality of semiconductor commercialization, where design success is only one variable among supply chain coordination, software optimization, and long-term procurement alignment.

What RNGD Scaling Enables — and What It Still Cannot Guarantee

Expanded RNGD production and HBM3E-based upgrades strengthen FuriosaAI’s position in enterprise inference workloads that prioritize power efficiency and memory bandwidth.

The public-sector channel through SysOne potentially opens stable domestic demand and reference case accumulation. Reference data centers abroad provide controlled environments to accelerate validation cycles with global partners.

Yet none of this guarantees displacement of incumbent GPU ecosystems. NVIDIA’s advantage lies not only in silicon but in software tooling, developer familiarity, and long-term procurement integration. Even if inference markets diversify, the transition is likely to be gradual.

FuriosaAI’s roadmap toward a third-generation chip within two years indicates strategic continuity. However, each generational jump increases capital requirements and execution complexity.

What Global Founders and Investors Should Watch in Korea’s AI Chip Market

For international stakeholders, the signal extends beyond one company.

Korea’s AI semiconductor ecosystem now presents a test case in mid-stage deep-tech scaling: early public and private capital helped bridge the “valley of death” between prototype and mass production. The next phase will test whether commercial traction can sustain independent growth without acquisition.

Global founders building inference-optimized models may see potential in alternative accelerator ecosystems if performance-per-watt metrics hold under production conditions. Institutional investors should monitor customer diversification, not headline production targets.

Cross-border partners evaluating Korea as an AI infrastructure base should examine reference deployments and public-sector adoption patterns. These early signals often precede broader market normalization.

Korea’s AI Semiconductor Strategy Now Faces Its First Operational Audit

Engineering confidence carried FuriosaAI through its development years. Commercial credibility will be earned differently.

When chip shipments rise, expectations rise with them. Capital markets reward scale only when scale proves repeatable and profitable. Korea’s ambition to build an AI semiconductor foothold will be judged less by announcements and more by procurement renewals, margin resilience, and international client retention.

The coming 12–24 months will clarify whether domestic AI accelerators become a structural layer in global inference infrastructure—or remain a compelling but contained alternative.

Key Takeaways on FuriosaAI and RNGD Chip Commercialization

- FuriosaAI has begun scaling mass production of its second-generation RNGD NPU, targeting 20,000 units annually.

- The company plans HBM3E-based Renegade+ and Renegade+ Max products to increase memory capacity and enterprise competitiveness.

- A public-sector distribution agreement with SysOne expands domestic deployment channels beyond pilot programs.

- Series D fundraising aims to finance production expansion and third-generation chip R&D ahead of a potential 2027–2028 IPO.

- Commercial scale, margin sustainability, and hyperscale customer diversification remain the decisive variables in Korea’s AI semiconductor trajectory.
